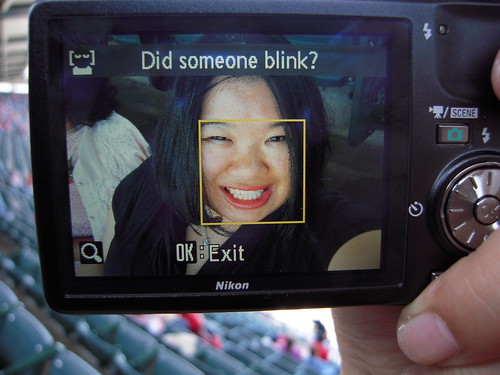

Consumers using webcams with face recognition and digicams with blink-alert have found themselves the victims of racial profiling. Or targeted ignoring-slash-teasing, as it were. Reports Time:

Wang, a Taiwanese-American strategy consultant who goes by the Web handle “jozjozjoz,” thought it was funny that the camera had difficulties figuring out when her family had their eyes open. So she posted a photo [above] of the blink warning on her blog under the title, “Racist Camera! No, I did not blink… I’m just Asian!” The post was picked up by Gizmodo and Boing Boing, and prompted at least one commenter to note, “You would think that Nikon, being a Japanese company, would have designed this with Asian eyes in mind.”

…

The principle behind face detection is relatively simple, even if the math involved can be complex. Most people have two eyes, eyebrows, a nose and lips – and an algorithm can be trained to look for those common features, or more specifically, their shadows. (For instance, when you take a normal image and heighten the contrast, eye sockets can look like two dark circles.) But even if face detection seems pretty straightforward, the execution isn’t always smooth.
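To make the "look for shadows" idea concrete, here is a toy sketch in Python. It is not Nikon's or HP's algorithm, and everything in it is an assumption made for illustration: the 4x4 grayscale patches, the row positions of the "eye band" and "cheek band", the threshold of 40, and the function names. It simply checks whether the eye row is noticeably darker than the row below it, and shows how heightening the contrast can bring that cue back in a dim, flat image.

```python
def stretch_contrast(img):
    """Linearly rescale pixels so the darkest becomes 0 and the brightest 255."""
    lo = min(min(row) for row in img)
    hi = max(max(row) for row in img)
    if hi == lo:
        # A perfectly flat image has no contrast to stretch.
        return [[0 for _ in row] for row in img]
    return [[(p - lo) * 255 // (hi - lo) for p in row] for row in img]


def region_mean(img, top, bottom):
    """Average brightness of rows top..bottom-1."""
    rows = img[top:bottom]
    return sum(sum(r) for r in rows) / sum(len(r) for r in rows)


def eye_band_score(img, eye_rows, cheek_rows):
    """Cheek brightness minus eye-band brightness (bigger = stronger shadow cue)."""
    return region_mean(img, *cheek_rows) - region_mean(img, *eye_rows)


def looks_like_face(img, eye_rows=(1, 2), cheek_rows=(2, 3), threshold=40):
    """Hypothetical rule: call it a face if the eye band is clearly darker."""
    return eye_band_score(img, eye_rows, cheek_rows) >= threshold


# Toy patches, 0 = black, 255 = white. Row 1 is the "eye band", row 2 the "cheeks".
well_lit = [
    [200, 200, 200, 200],
    [90, 200, 200, 90],    # dark eye sockets at the edges
    [210, 210, 210, 210],
    [200, 150, 150, 200],
]

# Same layout, but dim and low-contrast (made-up values).
under_lit = [
    [40, 40, 40, 40],
    [30, 40, 40, 30],
    [42, 42, 42, 42],
    [40, 36, 36, 40],
]

print(looks_like_face(well_lit))                     # shadow cue is strong
print(looks_like_face(under_lit))                    # shadow cue is too weak
print(looks_like_face(stretch_contrast(under_lit)))  # cue returns after stretching
```

In this toy setup the fixed threshold is what fails: the eye sockets are still the darkest pixels in the dim patch, but the gap is too small to count until the contrast is stretched.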

Indeed, just last month, a white employee at an RV dealership in Texas posted a YouTube video showing a black co-worker trying to get the built-in webcam on an HP Pavilion laptop to detect his face and track his movements. The camera zoomed in on the white employee and panned to follow her, but whenever the black employee came into the frame, the webcam stopped dead in its tracks. “I think my blackness is interfering with the computer’s ability to follow me,” the black employee jokingly concludes in the video. “Hewlett-Packard computers are racist.”

Here’s that video. It’s pretty hilarious. Desi seems like a funny guy:

According to Time, “HP’s lead social-media strategist Tony Welch wrote on a company blog within a week of the video’s posting…. The post linked to instructions on adjusting the camera settings, something both Consumer Reports and Laptop Magazine tested successfully in Web videos they put online.”

So that’s the easy answer right there. Don’t change the algorithms or the hardware, just add a step to the set-up process:

- Please select the ethnicity of the people you most often photograph/record:

- Caucasian (glarey, wide-eyed)

- Asian (squinty)

- Black (shadowy, evasive)

- Hispanic (kinda like a mix between Asian and Black)

And don’t pull any of that I-hang-out-with-people-of-all-colors shit. Do you want your pictures to come out well, or don’t you? Problem solved.